Zig vs Rust in 2026

Nearly 3 years ago, before coding agents, I wrote a bytecode VM and garbage collector in Zig and unsafe Rust, and felt that the human ergonomics of writing unsafe code was in Zig’s favor.

Unfortunately, I’m writing less and less code by hand nowadays, which means I have fewer and fewer reasons to use Zig. Every 1.5-5x human DX productivity boost from a Zig feature is eclipsed by the 100x boost from coding agents writing Rust.

Many of Zig’s greatest features were designed for human ergonomics, and human ergonomics don’t matter that much to agents.

I’ll talk about my favorite Zig features and why they don’t matter that much anymore.

Allocator interface

This is my favorite Zig feature. You feel so galaxy brain using a specialized allocator to optimize a code path (e.g. arena, stack fallback, etc.).

// A real example:
// Reading a line of user input requires a heap allocator because
// in theory the input length is unbounded. In practice though,
// the input is almost always a short search query or path that
// fits in well under 1kb.
//
// stackFallback puts a fixed-size buffer on the stack and only
// hits the heap when the input overflows it. The common case
// costs zero heap allocations. The rare long input still works
// because the allocator silently upgrades to the heap.
var stack_fallback = std.heap.stackFallback(256, heap_allocator);
const alloc = stack_fallback.get();
const line = try reader.readUntilDelimiterAlloc(alloc, '\n', 4096);
defer alloc.free(line);

The problem in Rust used to be that there was no equivalent of the Allocator interface. If you wanted a Vec<T> backed by a custom allocator, you literally had to copy+paste the std version and modify it to use your allocator. This is what Bumpalo did: look at the source, and you’ll see the collections are forks of the std versions wired up to the bump allocator.

For a long while now there has been an Allocator trait in nightly, and it seems to be good now. Because it is a trait, a collection that takes it as a generic parameter gets static dispatch, whereas Zig’s Allocator is based on a vtable.

Unlike Zig, Rust has no community-wide convention of making data structures parametric over the allocator, but AI changes the game: it is now trivial to copy-paste code and change that. I find it works well enough for my use case.
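To make "parametric over the allocator" concrete, here is a minimal sketch on stable Rust. `MiniAlloc`, `CountingAlloc`, and `Boxed` are hypothetical names invented for this example; `MiniAlloc` is a simplified stand-in for the nightly Allocator trait, not its real signature. The point is only that the allocator is a generic parameter `A`, so calls are resolved at compile time.

```rust
use std::alloc::{GlobalAlloc, Layout, System};
use std::cell::Cell;

/// Hypothetical, simplified stand-in for the nightly `Allocator` trait.
pub trait MiniAlloc {
    fn allocate(&self, layout: Layout) -> *mut u8;
    /// Safety: `ptr` must come from `allocate` on this allocator with the same `layout`.
    unsafe fn deallocate(&self, ptr: *mut u8, layout: Layout);
}

/// Forwards to the system allocator and counts live allocations.
pub struct CountingAlloc {
    live: Cell<usize>,
}

impl CountingAlloc {
    pub fn new() -> Self {
        CountingAlloc { live: Cell::new(0) }
    }

    pub fn live(&self) -> usize {
        self.live.get()
    }
}

impl MiniAlloc for CountingAlloc {
    fn allocate(&self, layout: Layout) -> *mut u8 {
        assert!(layout.size() > 0, "zero-sized types are out of scope for this sketch");
        // SAFETY: the layout has a non-zero size, as GlobalAlloc::alloc requires.
        let ptr = unsafe { System.alloc(layout) };
        assert!(!ptr.is_null(), "allocation failed");
        self.live.set(self.live.get() + 1);
        ptr
    }

    unsafe fn deallocate(&self, ptr: *mut u8, layout: Layout) {
        self.live.set(self.live.get() - 1);
        // SAFETY: the caller guarantees `ptr` and `layout` match a prior `allocate`.
        unsafe { System.dealloc(ptr, layout) };
    }
}

/// A single heap value, generic over the allocator (static dispatch on `A`).
pub struct Boxed<'a, T, A: MiniAlloc> {
    ptr: *mut T,
    alloc: &'a A,
}

impl<'a, T, A: MiniAlloc> Boxed<'a, T, A> {
    pub fn new_in(value: T, alloc: &'a A) -> Self {
        let ptr = alloc.allocate(Layout::new::<T>()) as *mut T;
        // SAFETY: `ptr` is non-null, aligned for `T`, and not yet initialized.
        unsafe { ptr.write(value) };
        Boxed { ptr, alloc }
    }

    pub fn get(&self) -> &T {
        // SAFETY: `ptr` was initialized in `new_in` and is freed only in `drop`.
        unsafe { &*self.ptr }
    }
}

impl<T, A: MiniAlloc> Drop for Boxed<'_, T, A> {
    fn drop(&mut self) {
        // SAFETY: `ptr` holds a valid `T` allocated by `self.alloc` with this layout.
        unsafe {
            std::ptr::drop_in_place(self.ptr);
            self.alloc.deallocate(self.ptr as *mut u8, Layout::new::<T>());
        }
    }
}
```

Calling `Boxed::new_in(42u64, &alloc)` with a `CountingAlloc` bumps `alloc.live()` to 1, and dropping the box brings it back to 0. Taking `&dyn MiniAlloc` instead of a generic `A` would be the closer analogue of Zig's vtable-based design.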

Arbitrary bit width integers + packed structs

Another beloved Zig feature of mine. It makes it so easy to do DOD-style CPU cache optimizations and things like tagged pointers and NaN boxing, and it even makes bitflags really easy to write.

Here’s a real example. When using Obj-C APIs like Metal through the Obj-C runtime C API, an id can be a tagged pointer instead of an aligned heap object pointer. I hit UB by passing a tagged NSNumber through code that assumed alignment.

So you need a cheap “heap pointer vs tagged immediate” check. Here’s a simplified Objective-C tagged pointer layout: one low bit says “not a heap pointer”, the next 3 bits identify the class slot, and the remaining 60 bits are payload:

pub const TaggedClass = enum(u3) {
    ns_atom = 0,
    ns_string = 1,
    ns_number = 2,
    ns_date = 3,
};

pub const ObjcTaggedPointer = packed struct {
    is_tagged: bool = true,
    class: TaggedClass,
    payload: u60,

    pub fn ns_number(n: u60) ObjcTaggedPointer {
        return .{ .class = .ns_number, .payload = n };
    }

    pub fn from_raw(raw: u64) ObjcTaggedPointer {
        return @bitCast(raw);
    }

    pub fn raw(self: ObjcTaggedPointer) u64 {
        return @bitCast(self);
    }

    pub fn is_ns_number(self: ObjcTaggedPointer) bool {
        return self.is_tagged and self.class == .ns_number;
    }
};

The Rust equivalent ORs the Objective-C class slot in on construction and masks it out on every access. The slot is just a u64 constant, not a real type:

pub struct ObjcTaggedPointer(u64);

impl ObjcTaggedPointer {
    const TAG_MASK: u64 = 0b1;
    const CLASS_MASK: u64 = 0b1110;
    const CLASS_SHIFT: u64 = 1;
    const PAYLOAD_SHIFT: u64 = 4;

    const CLASS_NS_ATOM: u64 = 0;
    const CLASS_NS_STRING: u64 = 1;
    const CLASS_NS_NUMBER: u64 = 2;
    const CLASS_NS_DATE: u64 = 3;

    // Unlike Zig's u60, nothing stops a caller from passing more than
    // 60 bits of payload; the top 4 bits are silently shifted out.
    pub fn ns_number(n: u64) -> Self {
        Self(
            (n << Self::PAYLOAD_SHIFT)
                | (Self::CLASS_NS_NUMBER << Self::CLASS_SHIFT)
                | Self::TAG_MASK,
        )
    }

    // Takes &self so the check does not consume the (non-Copy) wrapper.
    pub fn is_ns_number(&self) -> bool {
        self.0 & Self::TAG_MASK != 0
            && (self.0 & Self::CLASS_MASK) >> Self::CLASS_SHIFT == Self::CLASS_NS_NUMBER
    }
}

You can see the native Rust way is unergonomic. You’re actually better off using a crate like bitfield or bitflags, but both rely on macro magic to work, and I don’t find them as nice as Zig’s packed structs.

However, with coding agents I literally do not care how annoying it is to write the code by hand.
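For comparison, here is a sketch of the kind of hand-written middle ground an agent can produce without any macro crate: the class slot becomes a real enum, and the 60-bit payload limit is checked at construction. It assumes the simplified layout described above (1 tag bit, 3 class bits, 60 payload bits), not Apple's actual per-architecture layout, and the `new`, `class`, and `payload` helpers are illustrative additions.

```rust
/// Class slot as a real type instead of loose u64 constants.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
#[repr(u8)]
pub enum TaggedClass {
    NsAtom = 0,
    NsString = 1,
    NsNumber = 2,
    NsDate = 3,
}

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct ObjcTaggedPointer(u64);

impl ObjcTaggedPointer {
    const TAG_MASK: u64 = 0b1;
    const CLASS_SHIFT: u32 = 1;
    const CLASS_BITS: u64 = 0b111;
    const PAYLOAD_SHIFT: u32 = 4;
    const PAYLOAD_MAX: u64 = (1 << 60) - 1;

    /// Builds a tagged pointer; returns None if the payload needs more than 60 bits.
    pub fn new(class: TaggedClass, payload: u64) -> Option<Self> {
        if payload > Self::PAYLOAD_MAX {
            return None;
        }
        Some(Self(
            (payload << Self::PAYLOAD_SHIFT)
                | ((class as u64) << Self::CLASS_SHIFT)
                | Self::TAG_MASK,
        ))
    }

    pub fn from_raw(raw: u64) -> Self {
        Self(raw)
    }

    pub fn raw(self) -> u64 {
        self.0
    }

    /// The cheap "heap pointer vs tagged immediate" check.
    pub fn is_tagged(self) -> bool {
        self.0 & Self::TAG_MASK != 0
    }

    /// Decodes the class slot; None for heap pointers and unknown slots.
    pub fn class(self) -> Option<TaggedClass> {
        if !self.is_tagged() {
            return None;
        }
        match (self.0 >> Self::CLASS_SHIFT) & Self::CLASS_BITS {
            0 => Some(TaggedClass::NsAtom),
            1 => Some(TaggedClass::NsString),
            2 => Some(TaggedClass::NsNumber),
            3 => Some(TaggedClass::NsDate),
            _ => None,
        }
    }

    pub fn payload(self) -> u64 {
        self.0 >> Self::PAYLOAD_SHIFT
    }
}
```

It is still more code than the Zig packed struct, and the shifts and masks are still written by hand, but callers get `Option<TaggedClass>` rather than a raw integer comparison.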

Comptime

This is Zig’s flashiest feature. No other programming language, except maybe some obscure dependently typed languages, has compile-time evaluation as nice as Zig’s.

I thought I would miss it a lot, but I actually don’t. For me, 95% of comptime usage is to create Zig’s version of generic data structures with parametric types, e.g.:

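const std = @import("std");
const Allocator = std.mem.Allocator;

// A function that takes a type at compile time and returns a new struct type.
fn ArrayList(comptime T: type) type {
    return struct {
        items: []T,
        capacity: usize,
        allocator: Allocator,
    };
}

const IntList = ArrayList(i32);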

Rust covers this case with ordinary generics, and it has a better-designed type system IMO (see next section).

In the remaining 5% of cases, not having comptime sucks. The only reliable way to reach an equivalent is through codegen. I’m making a game right now, and I have hardcoded hitbox geometry data generated from a tool that I want to bake into a data structure. Without comptime, I have to get Claude to write a script that generates the Rust file. However, I don’t find myself needing compile time evaluation that much anyway.
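A minimal sketch of what such a generator might look like, written in Rust rather than as a script. The `Hitbox` fields and the `render_hitbox_module` name are made up for illustration and are not taken from the actual game or tool; the idea is just "data in, Rust source text out", with the result written to a file that the crate then includes.

```rust
use std::fmt::Write;

/// Hypothetical hitbox record; the real tool's data format is not shown in the post.
#[derive(Clone, Copy, Debug, PartialEq)]
pub struct Hitbox {
    pub x: f32,
    pub y: f32,
    pub w: f32,
    pub h: f32,
}

/// Renders the hitboxes as the text of a Rust module containing a const array.
/// Assumes all values are finite: NaN and infinity do not print as valid literals.
pub fn render_hitbox_module(boxes: &[Hitbox]) -> String {
    let mut out = String::new();
    out.push_str("// @generated: do not edit by hand.\n");
    out.push_str("pub struct Hitbox { pub x: f32, pub y: f32, pub w: f32, pub h: f32 }\n\n");
    writeln!(out, "pub const HITBOXES: [Hitbox; {}] = [", boxes.len()).unwrap();
    for b in boxes {
        // {:?} on a finite f32 keeps a decimal point or exponent (16.0, not 16),
        // so the emitted token is a float literal.
        writeln!(
            out,
            "    Hitbox {{ x: {:?}, y: {:?}, w: {:?}, h: {:?} }},",
            b.x, b.y, b.w, b.h
        )
        .unwrap();
    }
    out.push_str("];\n");
    out
}
```

The returned string can be written out with `std::fs::write` from a small binary or a build script. Compared with comptime, the cost is an extra build step and a generated file to keep in sync.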

Rust’s type system

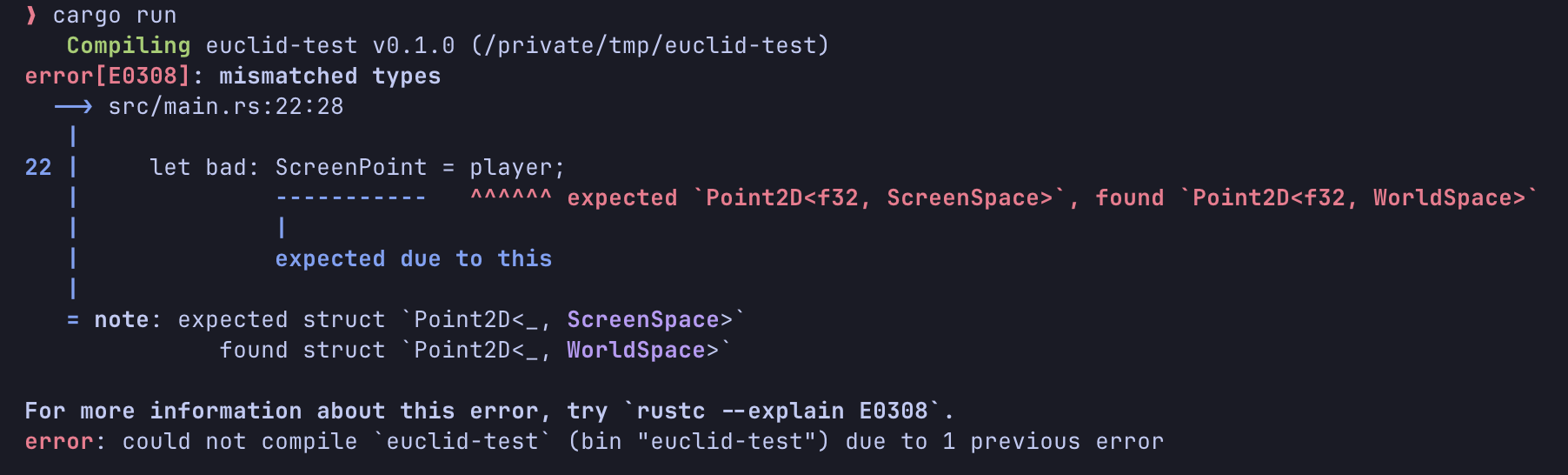

I think I’d rather trade having comptime for Rust’s better-designed type system, especially for bounded polymorphism (traits/typeclasses). Trying to do the equivalent in Zig is a nightmare.

Also, I think that Rust’s type system allows you to enforce more invariants and prevent coding agents from making common mistakes. In my game I use the euclid crate, which keeps you from mixing up coordinate spaces (a very common problem in graphics programming) by giving each coordinate space its own type (e.g. Point<Screen> or Point<World>):

use euclid::{point2, vec2, Point2D, Translation2D, Vector2D};

struct WorldSpace;
struct ScreenSpace;

type WorldPoint = Point2D<f32, WorldSpace>;
type WorldVector = Vector2D<f32, WorldSpace>;
type ScreenPoint = Point2D<f32, ScreenSpace>;

fn main() {
    let player: WorldPoint = point2(10.0, 20.0);
    let movement: WorldVector = vec2(5.0, 0.0);

    // Allowed: point + vector in the same space.
    let moved_player = player + movement;

    // Allowed: explicitly convert from world space to screen space.
    let world_to_screen = Translation2D::<f32, WorldSpace, ScreenSpace>::new(100.0, 50.0);
    let player_on_screen: ScreenPoint = world_to_screen.transform_point(moved_player);

    // Disallowed: can't mix coordinate spaces. This line is intentionally
    // a compile error (mismatched types).
    let bad: ScreenPoint = player;
}

This stops agents from making the stupid mistake of mixing world-space coordinates with screen-space coordinates: the compiler rejects the last line with a type mismatch.
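The mechanism behind this kind of API can be sketched with only the standard library. This is a rough illustration of the phantom-type technique, not euclid's actual implementation; `World`, `Screen`, `Point`, `Vector`, and `Translation` are names chosen for this example.

```rust
use std::marker::PhantomData;
use std::ops::Add;

// Marker types: they carry no data and exist only to tag a coordinate space.
#[derive(Clone, Copy, Debug, PartialEq)]
pub struct World;
#[derive(Clone, Copy, Debug, PartialEq)]
pub struct Screen;

#[derive(Clone, Copy, Debug, PartialEq)]
pub struct Point<S> {
    pub x: f32,
    pub y: f32,
    space: PhantomData<S>,
}

#[derive(Clone, Copy, Debug, PartialEq)]
pub struct Vector<S> {
    pub x: f32,
    pub y: f32,
    space: PhantomData<S>,
}

impl<S> Point<S> {
    pub fn new(x: f32, y: f32) -> Self {
        Point { x, y, space: PhantomData }
    }
}

impl<S> Vector<S> {
    pub fn new(x: f32, y: f32) -> Self {
        Vector { x, y, space: PhantomData }
    }
}

// Point + Vector is only defined when both share the same space `S`.
impl<S> Add<Vector<S>> for Point<S> {
    type Output = Point<S>;

    fn add(self, v: Vector<S>) -> Point<S> {
        Point::new(self.x + v.x, self.y + v.y)
    }
}

/// The only way to move a point from `Src` to `Dst` is an explicit transform.
pub struct Translation<Src, Dst> {
    dx: f32,
    dy: f32,
    spaces: PhantomData<(Src, Dst)>,
}

impl<Src, Dst> Translation<Src, Dst> {
    pub fn new(dx: f32, dy: f32) -> Self {
        Translation { dx, dy, spaces: PhantomData }
    }

    pub fn transform_point(&self, p: Point<Src>) -> Point<Dst> {
        Point::new(p.x + self.dx, p.y + self.dy)
    }
}
```

With these definitions, `Point<World> + Vector<World>` compiles, while `Point<World> + Vector<Screen>` or assigning a `Point<World>` to a `Point<Screen>` binding does not. The markers are zero-sized, so the tagging costs nothing at runtime.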

Not having to deal with memory issues

With coding agents allowing 100x more code to be written, you also need to scrutinize 100x more Zig code for memory issues. Without formal verification, the surface area you have to search for bugs is just so much larger now.

With the volume of code being generated now, Rust is even more attractive. Rust’s tradeoff was always that it hinders developer productivity, especially if you are unfamiliar with the borrow checker, but this simply does not matter with coding agents anymore.

And if you do use unsafe in Rust, there are tools like Miri that you can have the coding agent run the code under to check that it doesn’t cause UB or violate Rust’s aliasing rules.
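As a small illustration of the kind of unsafe code this applies to, here is a sketch of a helper that hands out two mutable references into one slice. `pair_mut` is a hypothetical function written for this example. The version below should be sound; deleting the `i == j` check would create two `&mut` to the same element, which is the sort of aliasing violation Miri is built to report when the tests run under it (typically via `cargo +nightly miri test`).

```rust
/// Returns mutable references to two distinct elements of a slice,
/// or None if the indices are equal or out of bounds.
pub fn pair_mut<T>(items: &mut [T], i: usize, j: usize) -> Option<(&mut T, &mut T)> {
    if i == j || i >= items.len() || j >= items.len() {
        return None;
    }
    let base = items.as_mut_ptr();
    // SAFETY: both indices are in bounds and distinct, so the two references
    // point at different elements and never alias. Both are derived from the
    // same raw pointer, which borrows `items` for the returned lifetime.
    unsafe { Some((&mut *base.add(i), &mut *base.add(j))) }
}
```

A test that swaps two elements through the returned pair exercises the unsafe block, which gives an agent something concrete to run under Miri after every change.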